On-Device AI: A Structural Shift in the Making?

Recent developments from Nvidia and Tesla/xAI point toward a potential transition in artificial intelligence: from centralized, cloud-based systems to intelligence embedded directly on devices. The technology is advancing. The key question is whether adoption will follow.

Introduction

Over the past two weeks, two announcements have drawn attention.

Nvidia introduced NemoClaw, an enterprise-oriented stack designed to support deployment of AI agents across environments. Around the same time, Elon Musk highlighted Macrohard, also referred to as Digital Optimus, a joint Tesla-xAI effort focused on building AI systems that can execute tasks across software environments.

One received significant coverage. The other less so. Both are worth examining. Taken together, they point to a broader shift: from AI accessed through centralized infrastructure to AI embedded within devices, workflows and systems.

Two announcements. Two different approaches. One broader direction.

From Cloud AI to Embedded Intelligence

The dominant AI model today remains cloud-centric. Models are hosted centrally and accessed through applications that depend on continuous connectivity. On-device or edge-based AI introduces an alternative.

Intelligence can increasingly reside closer to the user or system, reducing reliance on remote infrastructure. This changes both performance characteristics and system design.

Potential advantages

- Lower latency through local execution

- Greater control over data boundaries

- Reduced dependence on centralized compute

- Increased resilience in constrained environments

Emerging challenges

- Security becomes distributed rather than centralized

- Governance becomes more complex across endpoints

- Misuse risks increase as capabilities become more accessible

Decentralization extends capability, but it also multiplies the endpoints that must be secured and governed.

Why This Could Matter Strategically

The significance of these developments is structural rather than incremental. AI is moving beyond models toward systems: the integration of model, runtime, hardware and workflow.

"A useful way to think about this is through the idea of an appliance."

An appliance is a complete system designed to perform a function reliably with minimal user intervention. Applied to AI, this implies tightly integrated solutions where intelligence is embedded and operational rather than accessed on demand. This framing aligns with broader industry patterns where value accrues not only to the model itself, but to the integration of the full stack.

The Enterprise Adoption Question

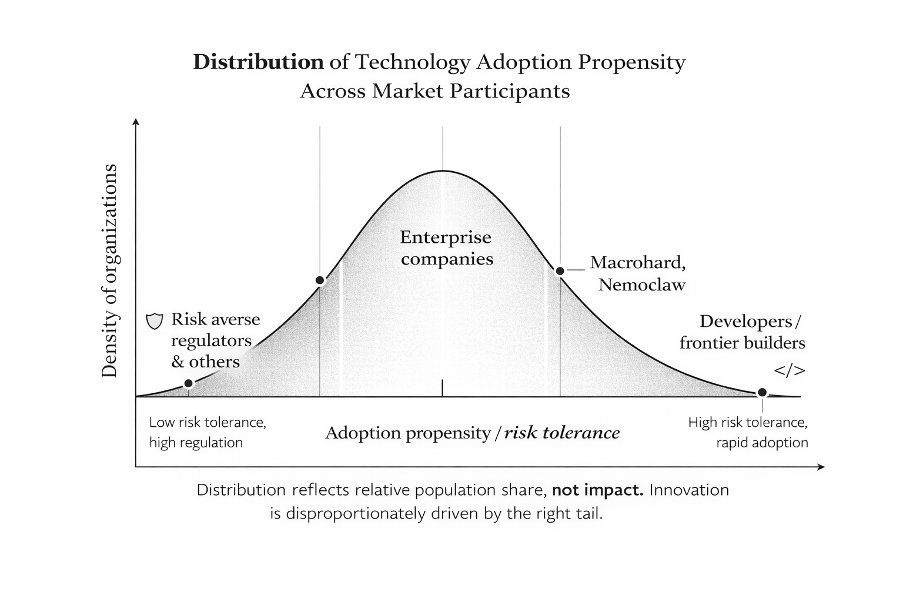

The key determinant of impact will not be experimentation at the frontier, but adoption at scale. Developers and early adopters will move quickly. Regulators will adapt more slowly.

"The decisive segment sits in the middle: the enterprise."

Banks, insurers, manufacturers, logistics providers and retailers form the operational core of the economy. If they adopt a technology, it scales. If they do not, it remains limited.

Current enterprise adoption of AI remains uneven, shaped by familiar constraints:

- trust and reliability requirements

- security and compliance concerns

- fragmented or inaccessible data

- legacy infrastructure

- organizational inertia

On-device AI may address some technical barriers, particularly around latency and control. It does not eliminate organizational resistance. Technology can reduce friction. It does not remove hesitation.

What to Watch

Three questions will shape how this evolves:

1. Enterprise readiness

Will on-device systems meet the reliability, auditability and control standards required at scale?

2. Economic positioning

Who captures value as AI shifts from models to integrated systems?

3. Market transition

Does this remain concentrated among early adopters or extend into mainstream enterprise deployment?

Conclusion

NemoClaw and Macrohard may evolve significantly from their current form. Their importance lies less in the specific implementations and more in the direction they indicate. AI appears to be moving from centralized access toward embedded capability.

In that shift, agents are likely to become the operational layer of AI systems, moving from assistants to task-executing digital workers embedded within products and devices. This suggests that the competitive frontier may shift from building better models to deploying integrated systems where models, agents and execution environments are tightly coupled.

If that trajectory continues, the next phase of the market will be defined not only by better models, but by better systems.

And as with previous shifts, the outcome will depend on adoption in the middle of the curve.

--

References

- Nvidia Newsroom: NemoClaw announcement

- Reuters: Tesla/xAI Macrohard reporting (March 2026)

- Ars Technica: Nvidia and OpenClaw context

- TechCrunch: Nvidia OpenClaw enterprise implications
